Diffusion Models - Live Coding Tutorial

This is my (mostly) live coding video, in which I implement from scratch a diffusion model that generates 32 x 32 RGB images. The tutorial assumes basic knowledge of deep learning and Python.

Links:

The Jupyter notebook built in this video: https://github.com/dtransposed/code_v...

My website: https://dtransposed.github.io

My Twitter: @dtransposed

Sources:

Lil'Log, "What are Diffusion Models?": https://lilianweng.github.io/posts/20...

Understanding Diffusion Models: A Unified Perspective: https://arxiv.org/abs/2208.11970

Denoising Diffusion Probabilistic Models: https://arxiv.org/abs/2006.11239

Timestamps:

0:00 Introduction

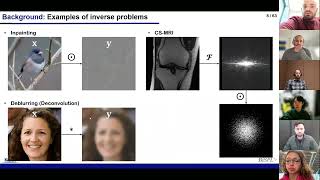

0:32 Theoretical background

13:13 Live Coding: Forward diffusion

41:29 Live Coding: Training loop

1:00:05 Live Coding: Overfitting one batch

1:03:36 Live Coding: Reverse diffusion

1:13:40 Live Coding: Training on the CIFAR-10 dataset

1:17:24 Live Coding: Result evaluation

1:19:40 (Bonus) Quick explanation of the UNet architecture used in the tutorial