Mamba - a replacement for Transformers?

Mamba is a new neural network architecture proposed by Albert Gu and Tri Dao.

Timestamps:

00:00 Mamba - a replacement for Transformers?

00:19 The Long Range Arena benchmark

01:20 Legendre Memory Units

02:07 HiPPO: Recurrent Memory with Optimal Polynomial Projections

02:38 Combining Recurrent, Convolutional and Continuous-time Models with Linear State-Space Layers

03:28 Efficiently Modeling Long Sequences with Structured State Spaces (S4)

05:46 The Annotated S4

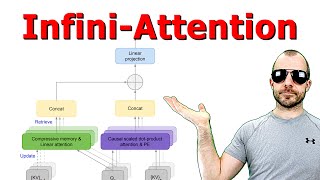

06:13 Mamba: Linear-Time Sequence Modeling with Selective State Spaces

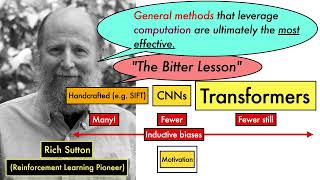

07:42 Motivation: Why selection is needed

09:59 S5

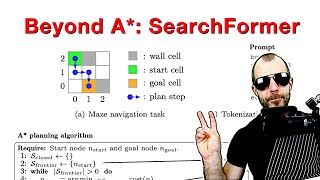

12:00 Empirical evaluation

The paper can be found here: https://arxiv.org/abs/2312.00752

Topics: #mamba #foundation

References for papers mentioned in the video can be found at

https://samuelalbanie.com/digests/202...

For related content:

Twitter: / samuelalbanie

Personal webpage: https://samuelalbanie.com/

YouTube: / @samuelalbanie1
