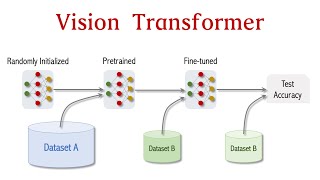

Vision Transformer Basics

An introduction to the use of transformers in computer vision.

Timestamps:

00:00 Vision Transformer Basics

01:06 Why Care about Neural Network Architectures?

02:40 Attention is all you need

03:56 What is a Transformer?

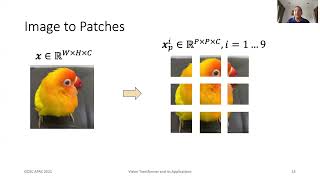

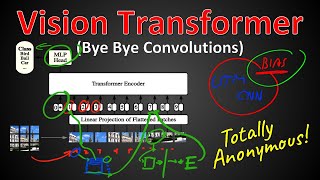

05:16 ViT: Vision Transformer (Encoder-Only)

06:50 Transformer Encoder

08:04 Single-Head Attention

11:45 Multi-Head Attention

13:36 Multi-Layer Perceptron

14:45 Residual Connections

16:31 LayerNorm

18:14 Position Embeddings

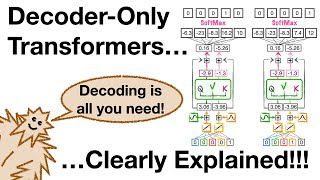

20:25 Cross/Causal Attention

22:14 Scaling Up

23:03 Scaling Up Further

23:34 What factors are enabling effective further scaling?

24:29 The importance of scale

26:04 Transformer scaling laws for natural language

27:00 Transformer scaling laws for natural language (cont.)

27:54 Scaling Vision Transformer

29:44 Vision Transformer and Learned Locality

Topics: #computervision #ai #introduction

Notes:

This lecture was given as part of the 2022/2023 4F12 course at the University of Cambridge.

It is an update to a previous lecture, which can be found here: • Neural network architectures, scaling...

Links:

Slides (pdf): https://samuelalbanie.com/files/diges...

References for papers mentioned in the video can be found at

http://samuelalbanie.com/digests/2023...

For related content:

Twitter: / samuelalbanie

Personal webpage: https://samuelalbanie.com/

YouTube: / @samuelalbanie1
