
Vision Transformers: Using transformer neural network architecture with images - Data Hub Tech Talk

Presenter: Lucas Sheneman, University of Idaho, Director Research Computing and Data Services

Presented: 4/16/2024

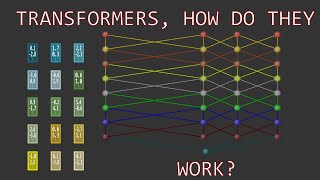

The transformer architecture is the basis for ChatGPT and other large language models. Transformers can also be applied to computer vision tasks by serializing images into sequences of patches. This architecture is arguably superior to traditional convolutional neural networks (CNNs) in several ways. We will build and test a Vision Transformer (ViT) from scratch in Python and demonstrate powerful existing ViT Python libraries.
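To make "serializing images into sequences of patches" concrete, here is a minimal, dependency-free sketch. It is not taken from the talk: the function name `image_to_patches`, the toy 4x4 single-channel image, and the patch size of 2 are all assumptions chosen for illustration. A real ViT would typically work on multi-channel tensors and pass each flattened patch through a learned linear projection.

```python
def image_to_patches(image, patch_size):
    """Split a 2D image (a list of rows) into a sequence of flattened patches.

    Patches are read left to right, top to bottom. This is a hypothetical
    helper for illustration, not the presenter's implementation.
    """
    height = len(image)
    width = len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")

    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Flatten one patch_size x patch_size block in row-major order.
            patch = [
                image[row][col]
                for row in range(top, top + patch_size)
                for col in range(left, left + patch_size)
            ]
            patches.append(patch)
    return patches


# A 4x4 single-channel toy image with pixel values 0..15 (assumed data).
toy_image = [[row * 4 + col for col in range(4)] for row in range(4)]
patches = image_to_patches(toy_image, patch_size=2)
print(len(patches))  # 4
print(patches[0])    # [0, 1, 4, 5]
```

The resulting list plays the role that a sequence of tokens plays in a language model: each flattened patch is one element of the sequence the transformer attends over.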
